
CIP Centralised Testing

The first goal in CIP testing was to create the B@D (Board at Desk) virtual machine, which lets developers run automated tests on a local BeagleBone Black or Renesas RZ/G1M iwg20m platform.

The second goal for CIP testing was to centralise testing so that developers can run tests without having local access to a platform. This becomes increasingly useful as the list of CIP reference platforms grows. It also means that tests can be triggered and run automatically, 24/7.

The third goal for CIP testing was to set up Continuous Integration (CI) testing to automatically test CIP software on CIP hardware. See the Continuous Integration testing overview page for more details.

The fourth goal for CIP testing is to integrate with the KernelCI project. This work is underway and the latest results can be viewed at dashboard.kernelci.org.

CIP Testing Architecture

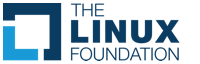

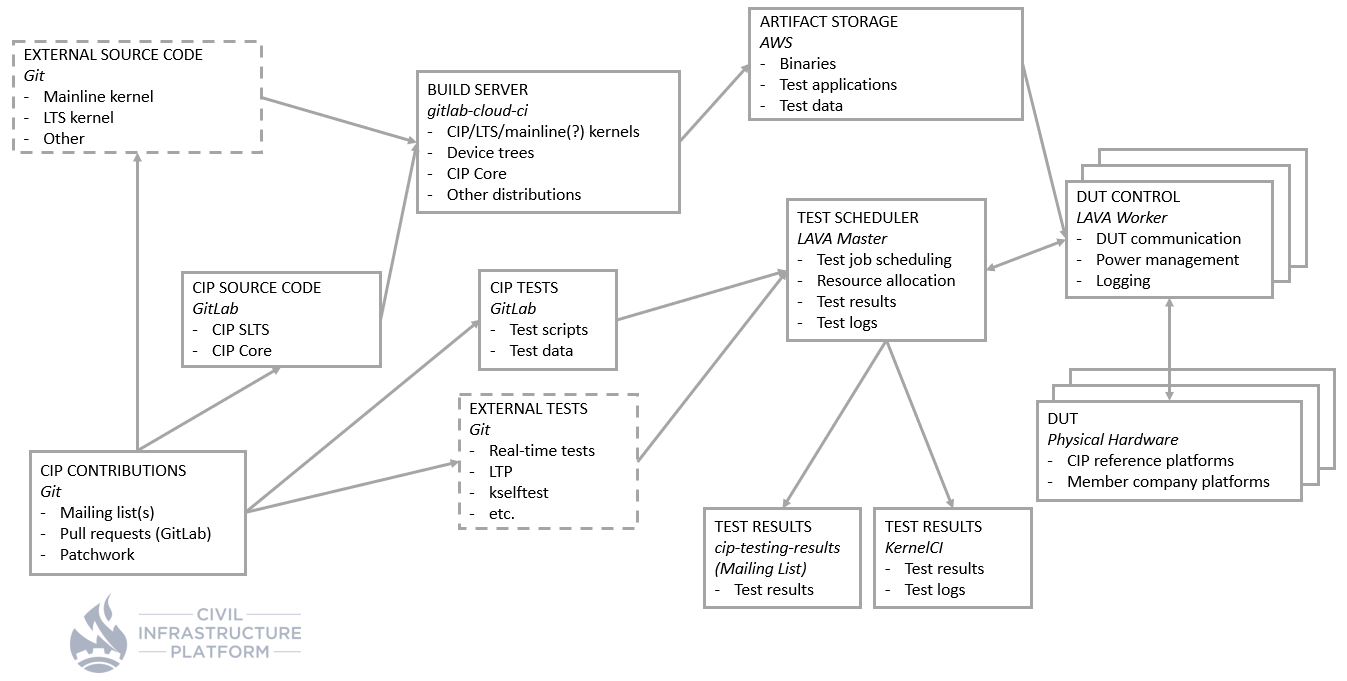

The block diagram provides an overview of CIP's centralised test infrastructure.

Each block is explained below.

Test Scheduler

The test scheduler assigns submitted test jobs to available hardware targets.

CIP has set up its own instance of LAVA (Linaro Automated Validation Architecture). LAVA is a continuous integration system for deploying operating systems onto physical and virtual hardware for running tests. See the LAVA website for more details.

CIP uses a version of LAVA, maintained by the KernelCI project, that runs in Docker containers.

Links

- CIP LAVA master website: https://lava.ciplatform.org

- CIP LAVA documentation: CIP LAVA Wiki

- cip-lava-docker source code: https://gitlab.com/cip-project/cip-testing/lava-docker

- How to submit a LAVA test job: Submitting a LAVA test job
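As a minimal sketch of the submission step (the linked page remains the authoritative guide), a job can be submitted from Python through LAVA's XML-RPC interface. It assumes the server exposes the usual `/RPC2` endpoint, accepts a username and API token embedded in the URL, and provides the `scheduler.submit_job` method; the username and token are placeholders for credentials created in your own LAVA account.

```python
import xmlrpc.client
from urllib.parse import quote, urlsplit, urlunsplit


def lava_rpc_url(server: str, username: str, token: str) -> str:
    """Return an authenticated XML-RPC URL of the form https://<user>:<token>@<host>/RPC2."""
    parts = urlsplit(server)
    # Percent-encode the credentials so characters such as '@' or ':' stay unambiguous.
    netloc = f"{quote(username, safe='')}:{quote(token, safe='')}@{parts.netloc}"
    return urlunsplit((parts.scheme, netloc, "/RPC2", "", ""))


def submit_job(server: str, username: str, token: str, job_yaml: str):
    """Submit a YAML job definition; LAVA is expected to reply with the new job ID(s)."""
    proxy = xmlrpc.client.ServerProxy(lava_rpc_url(server, username, token))
    return proxy.scheduler.submit_job(job_yaml)
```

For example, `submit_job("https://lava.ciplatform.org", "alice", token, open("job.yaml").read())` (with a hypothetical user `alice` and file `job.yaml`) would return the ID to look up on the LAVA master website.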

Test scheduler workflow

The following schematic shows the workflow for building test images and testing them with LAVA.

Links

- CIP Testing group: https://gitlab.com/cip-project/cip-testing

- CIP Kernel group: https://gitlab.com/cip-project/cip-kernel

Target Control

A LAVA worker (or lab) controls the targets (physical or virtual hardware) attached to it. There can be one or many LAVA workers.

Currently CIP has three LAVA workers. A summary of all of the attached targets can be viewed on the LAVA master.

CIP encourages setting up as many LAVA workers as possible and provides a guide on how to do this.
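The relationship between the scheduler, the workers and their targets can be pictured with a deliberately simplified model: each worker owns a set of targets, and queued jobs requesting a device type are handed, first come first served, to the first idle target of that type. Real LAVA scheduling also weighs job priority, device health and tags, so treat this as an illustration only; the target names and device types are hypothetical.

```python
from __future__ import annotations

from collections import deque
from dataclasses import dataclass, field


@dataclass
class Target:
    name: str
    device_type: str
    busy: bool = False


@dataclass
class Worker:
    name: str
    targets: list[Target] = field(default_factory=list)


def schedule(queue: deque, workers: list[Worker]) -> list[tuple[object, str]]:
    """Assign queued (job_id, device_type) pairs to idle targets in FIFO order.

    Jobs with no idle target of the requested type go back on the queue.
    """
    assignments = []
    waiting = deque()
    while queue:
        job_id, device_type = queue.popleft()
        # First idle target of the requested type on any worker, if there is one.
        target = next(
            (t for w in workers for t in w.targets
             if t.device_type == device_type and not t.busy),
            None,
        )
        if target is None:
            waiting.append((job_id, device_type))
        else:
            target.busy = True
            assignments.append((job_id, target.name))
    queue.extend(waiting)  # unscheduled jobs keep their original order
    return assignments
```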

Links

- lab-cip-cybertrust: https://lava.ciplatform.org/scheduler/worker/lab-cip-cybertrust

- lab-cip-mentor: https://lava.ciplatform.org/scheduler/worker/lab-cip-mentor

- lab-cip-renesas: https://lava.ciplatform.org/scheduler/worker/lab-cip-renesas

Target

A target is the physical (or virtual) platform connected to a LAVA worker.

The aim is to have every CIP reference platform connected to at least one worker. Ideally there should be more than one of each platform so that more hardware configurations can be tested.
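A quick way to track this aim is to compare the list of reference platforms with the device types of the targets attached to the workers (as listed on the LAVA master). In this sketch the platform names are hypothetical; `minimum=1` checks the "at least one" goal and the default `minimum=2` checks the ideal of having more than one of each.

```python
from collections import Counter


def coverage_gaps(reference_platforms, attached_device_types, minimum=2):
    """Return {platform: attached_count} for every reference platform that has
    fewer than `minimum` targets attached across all workers."""
    counts = Counter(attached_device_types)  # missing platforms count as 0
    return {p: counts[p] for p in reference_platforms if counts[p] < minimum}
```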

Links

- CIP reference hardware: cipreferencehardware

Test Results

Test results need to be easy to view; otherwise there is little point in running tests.

There are four ways to view results from tests run in the CIP testing infrastructure.

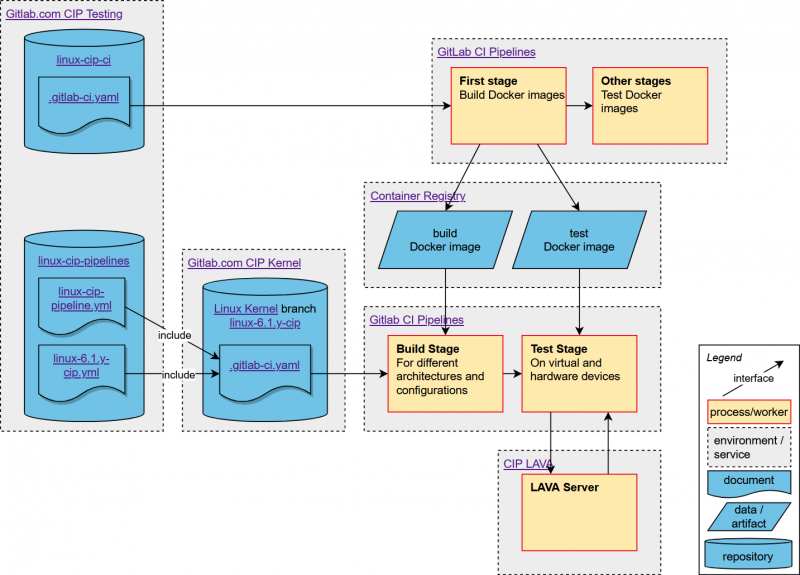

LAVA

LAVA hosts its own results page, which shows which test cases have been run, their results, and links to the relevant logs.
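Once a job's results have been exported from LAVA and parsed into a list of dictionaries, a per-suite summary is easy to produce. This sketch assumes each entry carries `suite`, `name` and `result` keys, and that LAVA reports its own deploy and boot actions under a suite named `lava`; both are assumptions to check against your LAVA version, and the sample suite names are hypothetical.

```python
from collections import Counter, defaultdict

IGNORED_SUITES = ("lava",)  # assumption: LAVA's own infrastructure results


def summarise(results, ignore=IGNORED_SUITES):
    """Count outcomes (pass, fail, skip, ...) per test suite."""
    per_suite = defaultdict(Counter)
    for r in results:
        if r["suite"] not in ignore:
            per_suite[r["suite"]][r["result"]] += 1
    return {suite: dict(counts) for suite, counts in per_suite.items()}


def failures(results, ignore=IGNORED_SUITES):
    """List failing test cases as 'suite/name'."""
    return [f"{r['suite']}/{r['name']}" for r in results
            if r["result"] == "fail" and r["suite"] not in ignore]
```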

KernelCI

KernelCI is a widely used project that aims to unify all upstream Linux kernel testing efforts, providing a single place to store, view, compare and track these results.

CIP plans to submit its test results to kernelci.org so that anyone can view them.

Mailing list

The CIP project hosts a mailing list where test results can be reported.

LAVA can also email results to any address specified in the job definition.
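As a sketch of the email option, the helper below renders a `notify` section to append to a LAVA job definition. It assumes LAVA's notification syntax of a `criteria` status plus a list of email `recipients`, with `finished` matching any job that has ended; the addresses in the usage are placeholders.

```python
def notify_block(emails, status="finished"):
    """Render a LAVA `notify` YAML section that emails each address once the
    job reaches `status` (assumed notification syntax, see lead-in)."""
    lines = ["notify:", "  criteria:", f"    status: {status}", "  recipients:"]
    for addr in emails:
        lines += ["  - to:", "      method: email", f"      email: {addr}"]
    return "\n".join(lines) + "\n"
```

For example, `notify_block(["dev@example.com"])` yields a block that can be pasted at the top level of a job definition.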

SQUAD

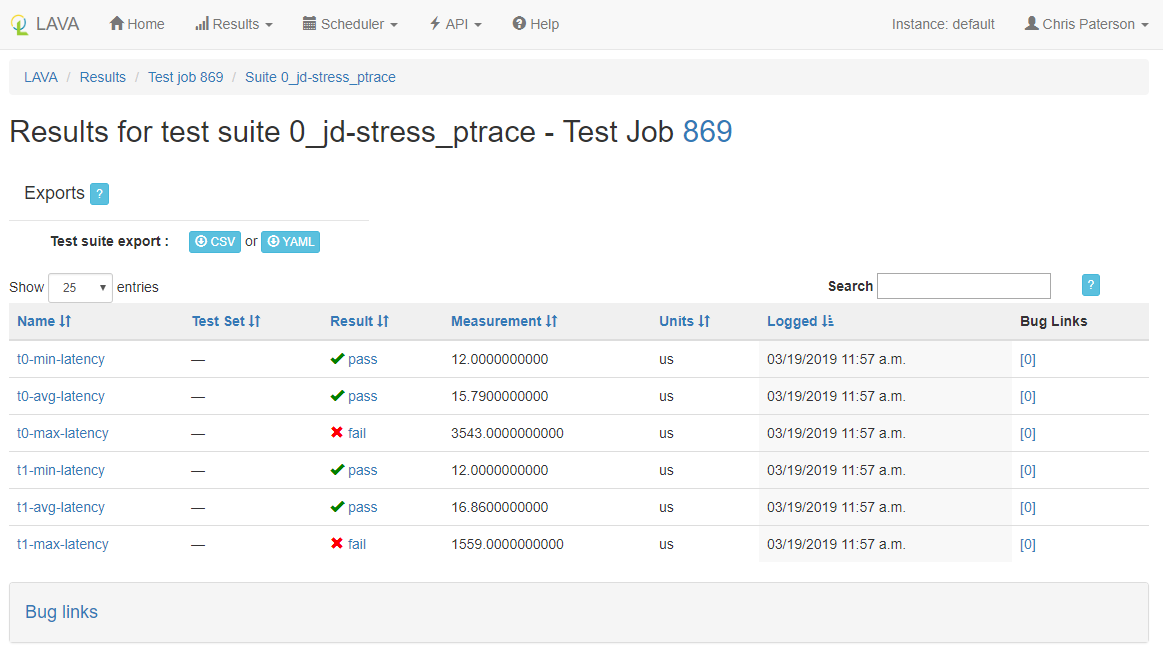

For detailed test reporting, CIP uses the Software Quality Dashboard (SQUAD). Both build results and test results are reported to the CIP SQUAD instance.

The following diagram shows how build results are reported to SQUAD and test jobs are submitted to LAVA and monitored by SQUAD.
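A minimal sketch of reporting results to SQUAD from Python, assuming SQUAD's submission endpoint `api/submit/<group>/<project>/<build>/<environment>`, an `Auth-Token` header, and a `tests` field holding a JSON mapping of test names to `pass` or `fail`. The group, project, build and environment names in the usage are hypothetical, and the fields are sent URL-encoded rather than as the multipart form shown in SQUAD's own curl examples, which is assumed (not confirmed) to be equivalent.

```python
import json
import urllib.parse
import urllib.request


def squad_submit_request(base_url, token, group, project, build, environment,
                         tests, metadata=None):
    """Build (but do not send) a POST request that reports `tests` to SQUAD."""
    segments = ("api", "submit", group, project, build, environment)
    path = "/".join(urllib.parse.quote(s, safe="") for s in segments)
    fields = {"tests": json.dumps(tests)}  # e.g. {"smoke/uname": "pass"}
    if metadata:
        fields["metadata"] = json.dumps(metadata)
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/{path}",
        data=urllib.parse.urlencode(fields).encode(),
        headers={"Auth-Token": token},
        method="POST",
    )
```

Passing the returned request to `urllib.request.urlopen` would actually send it; keeping construction separate makes the request easy to inspect before anything is posted.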

Links

- CIP LAVA master results page: https://lava.ciplatform.org/results

- CIP KernelCI instance: https://kernelci.ciplatform.org

- CIP test results mailing list: https://lists.cip-project.org/mailman/listinfo/cip-testing-results

- CIP SQUAD Reporting: https://squad.ciplatform.org/

Artifact Storage

A number of different artifacts are required for testing. These may include Kernel, device tree and filesystem binaries, test applications and test data.

These artifacts need to be stored somewhere that all LAVA workers can access.

CIP uses Amazon Web Services Simple Storage Service (AWS S3).
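One way to keep artifacts usable by every worker is a predictable path layout plus a published checksum, so any worker can build the download URL and verify what it fetched. The `<project>/<version>/<arch>/<file>` layout below is a hypothetical convention for illustration, not CIP's actual storage structure, and the S3 upload itself is left out.

```python
import hashlib
from pathlib import PurePosixPath

BASE_URL = "http://download.cip-project.org"  # CIP artifact storage (see Links)


def artifact_key(project: str, version: str, arch: str, filename: str) -> str:
    """Hypothetical layout: <project>/<version>/<arch>/<filename>."""
    return str(PurePosixPath(project, version, arch, filename))


def artifact_url(key: str) -> str:
    return f"{BASE_URL}/{key}"


def sha256_hex(data: bytes) -> str:
    """Checksum to publish next to the artifact."""
    return hashlib.sha256(data).hexdigest()


def verify(data: bytes, expected_hex: str) -> bool:
    """What a worker would check after downloading an artifact."""
    return sha256_hex(data) == expected_hex
```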

Links

- CIP artifact storage: http://download.cip-project.org

CIP Tests

Tests created and shared by CIP are stored in a public GitLab repository for all to use.

In addition to individual test cases, the repository provides sample LAVA test job definition YAML files that work with the CIP LAVA infrastructure.
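As a sketch of how a job definition can pull test cases from this repository, the helper below renders a LAVA `test` action whose `definitions` are fetched with git. It assumes LAVA's definition syntax of `repository`, `from: git`, `path` and `name`; the repository URL comes from the Links list, while the definition path in the usage is hypothetical. The same pattern can point at external repositories of test definitions.

```python
CIP_TESTS_REPO = "https://gitlab.com/cip-project/cip-testing/cip-kernel-tests"


def lava_test_action(definitions, timeout_minutes=30, repository=CIP_TESTS_REPO):
    """Render a LAVA `test` action (YAML) that runs each (name, path)
    definition fetched with git from `repository`."""
    lines = ["- test:", "    timeout:", f"      minutes: {timeout_minutes}",
             "    definitions:"]
    for name, path in definitions:
        lines += [f"    - repository: {repository}", "      from: git",
                  f"      path: {path}", f"      name: {name}"]
    return "\n".join(lines) + "\n"
```

For example, `lava_test_action([("smoke", "smoke/smoke.yaml")])` produces one definition entry with a 30-minute timeout.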

Links

- CIP Kernel tests repository: https://gitlab.com/cip-project/cip-testing/cip-kernel-tests

Build Server

A key part of any CI/CD setup is the ability to build the software. Builds are triggered automatically whenever the software changes. Each new build is uploaded to the artifact storage and then tested on real hardware in the LAVA infrastructure.
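The trigger, build, upload and test sequence can be modelled as a chain of stages, each consuming the previous stage's output and halting at the first failure. This is a simplified sketch: in the usage, the stage functions are hypothetical stand-ins for a real build job, an S3 upload and a LAVA submission.

```python
from typing import Any, Callable, Sequence, Tuple

Stage = Tuple[str, Callable[[Any], Any]]


def run_pipeline(change: str, stages: Sequence[Stage]) -> Tuple[bool, list]:
    """Feed the change through each stage in turn; stop at the first failure.

    Returns (succeeded, names of the stages that completed).
    """
    completed = []
    value: Any = change
    for name, step in stages:
        try:
            value = step(value)
        except Exception:  # any error counts as a failed stage in this sketch
            return False, completed
        completed.append(name)
    return True, completed
```

For example, stages `("build", ...)`, `("upload", ...)` and `("test", ...)` run in order, and a compile error in `build` means nothing is uploaded or submitted.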

CIP Source Code

CIP hosts a number of software projects, including the SLTS Linux Kernels and CIP Core (our reference filesystem).

All CIP software is publicly available on CIP's GitLab site.

Links

- CIP GitLab home: https://gitlab.com/cip-project

- CIP SLTS Linux Kernels: https://gitlab.com/cip-project/cip-kernel/linux-cip

- CIP Core: https://gitlab.com/cip-project/cip-core

- CIP Testing: https://gitlab.com/cip-project/cip-testing

CIP Contributions

Anyone can submit code to any of the CIP projects, either by opening a merge request against the relevant GitLab repository or by sending patches to the cip-dev mailing list.

Patches that are submitted to the cip-dev mailing list are tracked using patchwork.
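For the mailing-list route, patches are typically prepared with `git format-patch` and sent with `git send-email`. The helpers below only build those command lines; the `cip-dev@lists.cip-project.org` posting address is an assumption based on the list's Mailman page, and the base branch and reviewer address in the usage are placeholders.

```python
CIP_DEV = "cip-dev@lists.cip-project.org"  # assumed posting address of cip-dev


def format_patch_cmd(base: str, outdir: str = "outgoing") -> list:
    """Export every commit after `base` as a numbered patch file in `outdir`."""
    return ["git", "format-patch", "-o", outdir, f"{base}..HEAD"]


def send_email_cmd(patch_files, to: str = CIP_DEV, cc=()) -> list:
    """Mail the patch files to the list, copying any extra reviewers."""
    return (["git", "send-email", f"--to={to}"]
            + [f"--cc={addr}" for addr in cc]
            + list(patch_files))
```

Each list can be passed to `subprocess.run(cmd, check=True)` from inside the repository.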

Links

- CIP GitLab home: https://gitlab.com/cip-project

- CIP development mailing list: https://lists.cip-project.org/mailman/listinfo/cip-dev

- CIP Patchwork: https://patchwork.kernel.org/project/cip-dev/list

External Tests

CIP doesn't plan to duplicate tests that are already published by other projects. Instead, part of our regular testing is to run a large number of external test cases, such as those provided by the Linux Test Project (LTP) or by Linaro.

Links

- Linux Test Project: http://linux-test-project.github.io

- Linaro test definitions: https://github.com/Linaro/test-definitions

External Source Code

In many cases CIP needs to reuse code from other projects. For example, it is worth building and testing the LTS Linux Kernel, because the CIP SLTS Kernel is based on it: issues that are reported and fixed in LTS pre-releases directly improve the stability of the CIP Kernel.
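To test LTS pre-releases alongside a CIP branch, a CI job needs to know which upstream branch to fetch. This sketch assumes CIP SLTS branches are named like `linux-4.19.y-cip` and that the matching stable-rc branch is `linux-4.19.y`; real-time (`-rt`) branches are deliberately not handled. The repository URL comes from the Links list.

```python
import re

STABLE_RC_REPO = "https://git.kernel.org/pub/scm/linux/kernel/git/stable/linux-stable-rc.git/"

# Assumed naming: linux-X.Y.y-cip (CIP SLTS) is based on linux-X.Y.y (stable).
_CIP_BRANCH = re.compile(r"linux-(\d+)\.(\d+)\.y-cip")


def upstream_rc_branch(cip_branch: str):
    """Return the stable-rc branch a CIP SLTS branch is based on, or None."""
    m = _CIP_BRANCH.fullmatch(cip_branch)
    return f"linux-{m[1]}.{m[2]}.y" if m else None


def fetch_rc_cmd(cip_branch: str) -> list:
    """git command that fetches the matching pre-release branch for testing."""
    branch = upstream_rc_branch(cip_branch)
    if branch is None:
        raise ValueError(f"not a CIP SLTS branch name: {cip_branch}")
    return ["git", "fetch", STABLE_RC_REPO, branch]
```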

Links

- Mainline Linux Kernel: https://git.kernel.org/pub/scm/linux/kernel/git/torvalds/linux.git

- Stable Kernel repository: https://git.kernel.org/pub/scm/linux/kernel/git/stable/linux.git

- Stable Kernel release candidate repository: https://git.kernel.org/pub/scm/linux/kernel/git/stable/linux-stable-rc.git/

- Real Time Kernel repository: https://git.kernel.org/pub/scm/linux/kernel/git/rt/linux-rt-devel.git